
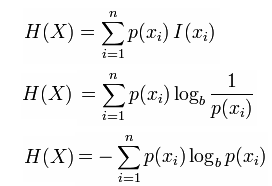

Entropy forms the basis of the universe and everything in it. Why should deep learning be any different? Entropy is widely used in information theory (the variant used there is Shannon's entropy) and has made its way into deep learning as well (cross-entropy loss and KL divergence). Let's understand the concept of Shannon's entropy.

This miniMOOC teaches the math behind Shannon's entropy. Shannon started by asking what "information" is and how it can be measured. Instead of giving a definition, he claimed that any function that measures information must have three properties, and proved that there is a single function that meets these three properties. This function came to be known as Shannon's entropy.

There are six short clips in this miniMOOC. Each clip is accompanied by exercises or a quiz that let you deepen your understanding.

1. The problem: states the problem that Shannon tried to solve.
2. The solution: introduces Shannon's entropy.
3. The three properties: explains the three properties that every function for the amount of information must have.
4. Equal probabilities: proves that Shannon's entropy is the only function that has the three properties if the events' probabilities are equal.
5. Rational probabilities: proves that Shannon's entropy is the only function that has the three properties if the events' probabilities are rational numbers.
6. Real probabilities: proves that Shannon's entropy is the only function that has the three properties if the events' probabilities are real numbers.

It was made for undergraduate students, but we believe that anyone with a basic knowledge of calculus and algebra can get through the math in this miniMOOC.

Rivki Gadot (Open University, Lev Academic Center) & Dvir Lanzberg (the lecturer).

Shannon entropy is defined for a given discrete probability distribution; it measures how much information is required, on average, to identify random samples drawn from that distribution. The Shannon entropy equation provides a way to estimate the average minimum number of bits needed to encode a string of symbols, based on the frequency of the symbols.

We shall call $H = -\sum_{i=1}^{n} p_i \log p_i$ the entropy of the set of probabilities $p_1, \ldots, p_n$.

Relations between Shannon entropy and Rényi entropies of integer order are discussed. For any N-point discrete probability distribution for which the Rényi...
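To make the entropy formula concrete, here is a minimal Python sketch of $H = -\sum_i p_i \log p_i$, together with the Rényi entropy of order $\alpha \neq 1$, $H_\alpha = \frac{1}{1-\alpha} \log \sum_i p_i^\alpha$. It assumes the probabilities arrive as a plain list and that entropy is measured in bits (base 2). The function names `shannon_entropy`, `renyi_entropy`, and `string_entropy` are illustrative choices, not something taken from the miniMOOC.

```python
import math
from collections import Counter


def shannon_entropy(probs, base=2.0):
    """Return H = -sum(p_i * log(p_i)); terms with p_i == 0 contribute 0."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probs must be non-negative and sum to 1")
    return -sum(p * math.log(p, base) for p in probs if p > 0)


def renyi_entropy(probs, order, base=2.0):
    """Return the Renyi entropy log(sum(p_i ** order)) / (1 - order).

    Order 1 is treated as the limiting case, which is the Shannon entropy.
    """
    if order == 1:
        return shannon_entropy(probs, base)
    power_sum = sum(p ** order for p in probs if p > 0)
    return math.log(power_sum, base) / (1 - order)


def string_entropy(text, base=2.0):
    """Entropy of the empirical symbol frequencies of a non-empty string."""
    counts = Counter(text)
    total = len(text)
    return shannon_entropy([c / total for c in counts.values()], base)
```

With these definitions, a fair coin `[0.5, 0.5]` carries 1 bit and a uniform choice among four outcomes carries 2 bits. For a non-uniform distribution such as `[0.5, 0.25, 0.25]`, the Rényi entropies of order 2 and 3 come out below the Shannon entropy, since the Rényi entropy does not increase as the order grows.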

